CHAOS ENGINEERING

The demo works perfectly.

The model responds intelligently.

The chatbot feels magical.

The executive nods. Funding gets approved.

Then production begins.

And that’s where reality starts asking harder questions.

AI systems rarely fail because the model is bad.

They fail because the system around the model wasn’t built for real life.

1️⃣ The Demo Is Controlled. Production Is Not.

In a demo, the data is clean.

The inputs are curated.

The prompts are rehearsed.

In production, users type incomplete sentences, slang, sensitive information, unexpected formats, and sometimes pure nonsense.

Demos showcase model intelligence.

Production exposes input unpredictability.

Most AI systems are built and evaluated for capability, not resilience.

Break point: Unstructured, noisy, real-world input.
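One way to make that break point concrete is a small pre-processing step that runs before any text reaches the model. The sketch below is illustrative only: `prepare_input`, `MAX_CHARS`, and the card-number pattern are assumptions made for this example, not part of any particular framework, and real sensitive-data detection needs far more than one regex.

```python
import re
import unicodedata
from dataclasses import dataclass
from typing import Optional

MAX_CHARS = 2000  # hypothetical limit; tune to your own context budget

# Illustrative pattern only: 13 to 16 digits, optionally separated by spaces or dashes.
CARD_LIKE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


@dataclass
class CleanInput:
    text: str
    redacted: bool   # True if something that looked sensitive was masked
    truncated: bool  # True if the input exceeded MAX_CHARS


def prepare_input(raw: str) -> Optional[CleanInput]:
    """Normalize user text before it reaches the model. None means reject."""
    # Fold Unicode look-alikes into a canonical form.
    text = unicodedata.normalize("NFKC", raw)
    # Drop control characters but keep newlines and tabs for the next step.
    text = "".join(ch for ch in text if ch.isprintable() or ch in "\n\t")
    # Collapse every run of whitespace into a single space.
    text = re.sub(r"\s+", " ", text).strip()
    if not text:
        return None  # empty or whitespace-only input
    masked = CARD_LIKE.sub("[REDACTED]", text)
    return CleanInput(
        text=masked[:MAX_CHARS],
        redacted=masked != text,
        truncated=len(masked) > MAX_CHARS,
    )


if __name__ == "__main__":
    print(prepare_input("my  card is 4111 1111 1111 1111 thanks"))
```

The point is not this specific set of rules. It is that messy input gets an explicit, testable path instead of being passed straight through.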

2️⃣ Accuracy ≠ Reliability

A model can score 92% accuracy in testing and still fail operationally.

Why?

Because production measures more than “correct answers.”

It also measures:

- Response time for a single request

- Latency under concurrent load

- Error handling

- Consistency across edge cases

- Integration reliability

Accuracy is a lab metric.

Reliability is a system metric.

And most teams optimize the former.

Break point: Infrastructure, scaling, and latency failures.
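To treat reliability as a system metric, teams usually look at tail latency and error rate rather than averages. Here is a minimal sketch using only the standard library. The `call` argument stands in for whatever function invokes your model; it is a placeholder, not a real client.

```python
import statistics
import time


def measure_latencies(call, payloads):
    """Time each call and count the ones that raise. Returns (latencies, errors)."""
    latencies, errors = [], 0
    for payload in payloads:
        start = time.perf_counter()
        try:
            call(payload)
        except Exception:
            errors += 1  # a failed call still counts toward latency
        latencies.append(time.perf_counter() - start)
    return latencies, errors


def summarize(latencies, errors):
    """Report median, tail latency, and error rate for one test run."""
    # quantiles(n=100) returns 99 cut points; index 94 is p95, index 98 is p99.
    cuts = statistics.quantiles(latencies, n=100)
    return {
        "p50": statistics.median(latencies),
        "p95": cuts[94],
        "p99": cuts[98],
        "error_rate": errors / len(latencies),
    }


if __name__ == "__main__":
    # Made-up latencies in seconds: mostly fast, with two slow outliers.
    sample = [0.1] * 98 + [0.5, 2.0]
    print(summarize(sample, errors=2))
```

A model can look fine on p50 and still feel broken to the users who land in the p99 tail.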

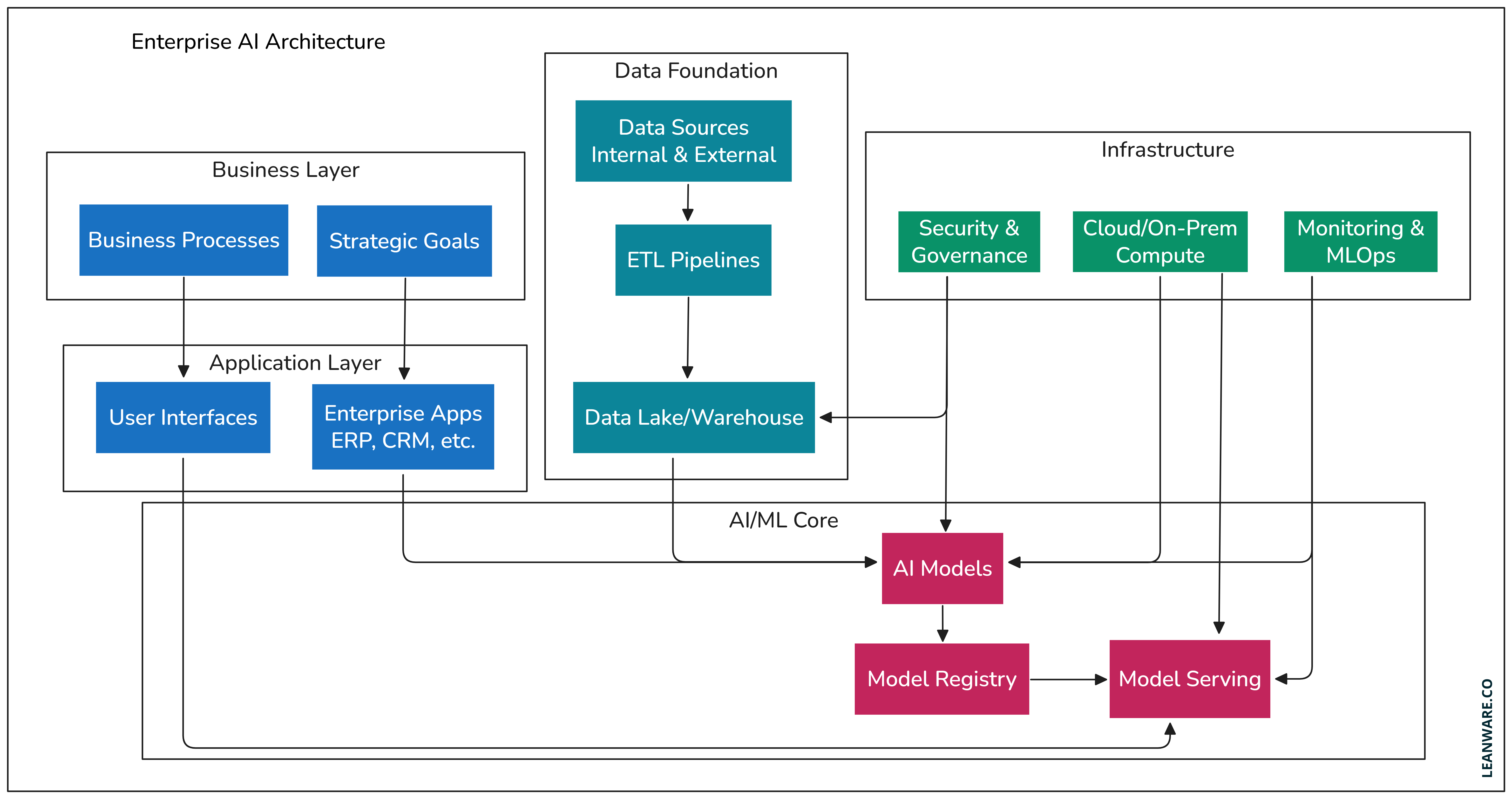

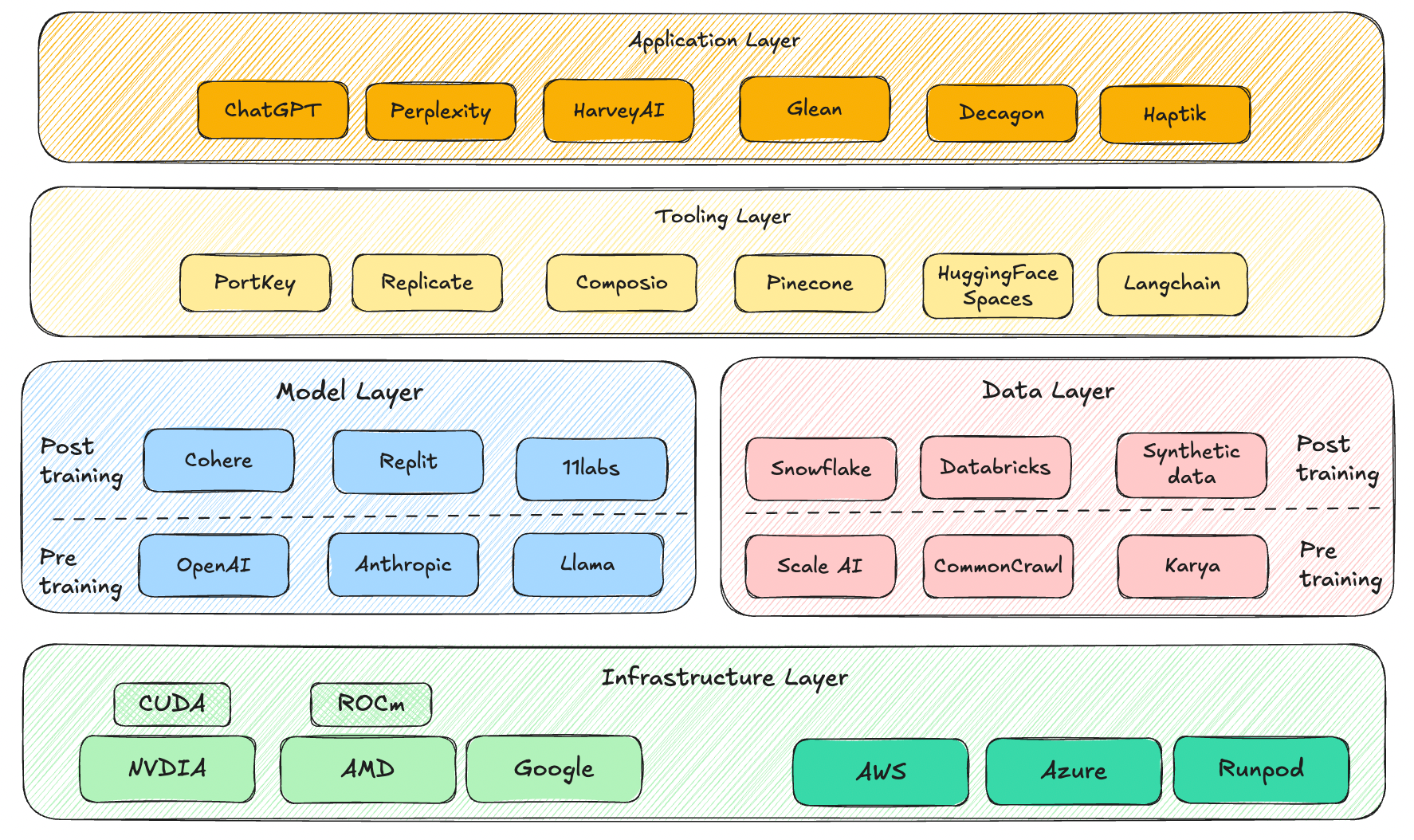

3️⃣ The Integration Gap

In demos, AI stands alone.

In production, AI must integrate with:

- Databases

- CRMs

- Payment systems

- Authentication layers

- Compliance workflows

- Logging & monitoring systems

The model isn’t the problem.

The integration surface is.

Every API call introduces delay.

Every external dependency introduces failure risk.

AI doesn’t break alone.

It breaks inside ecosystems.

Break point: System orchestration and dependency chains.
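A rough back-of-the-envelope calculation shows why dependency chains matter. The sketch below assumes the calls happen one after another and fail independently, which is a simplification. The dependency names and numbers are invented for illustration.

```python
from dataclasses import dataclass
from math import prod


@dataclass(frozen=True)
class Dependency:
    name: str
    availability: float  # fraction of calls that succeed
    latency_ms: float    # typical latency added per call


def chain_availability(deps):
    """Probability that every dependency in a sequential chain succeeds."""
    return prod(d.availability for d in deps)


def chain_latency_ms(deps):
    """Total added latency when the calls run one after another."""
    return sum(d.latency_ms for d in deps)


# Hypothetical numbers, not measurements.
chain = [
    Dependency("auth", 0.999, 20),
    Dependency("crm", 0.995, 80),
    Dependency("database", 0.999, 15),
    Dependency("model_api", 0.99, 900),
]

if __name__ == "__main__":
    print(f"availability: {chain_availability(chain):.4f}")
    print(f"latency: {chain_latency_ms(chain)} ms")
```

With these made-up figures, four individually solid services combine into a chain that succeeds only about 98.3% of the time and adds just over a second per request.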

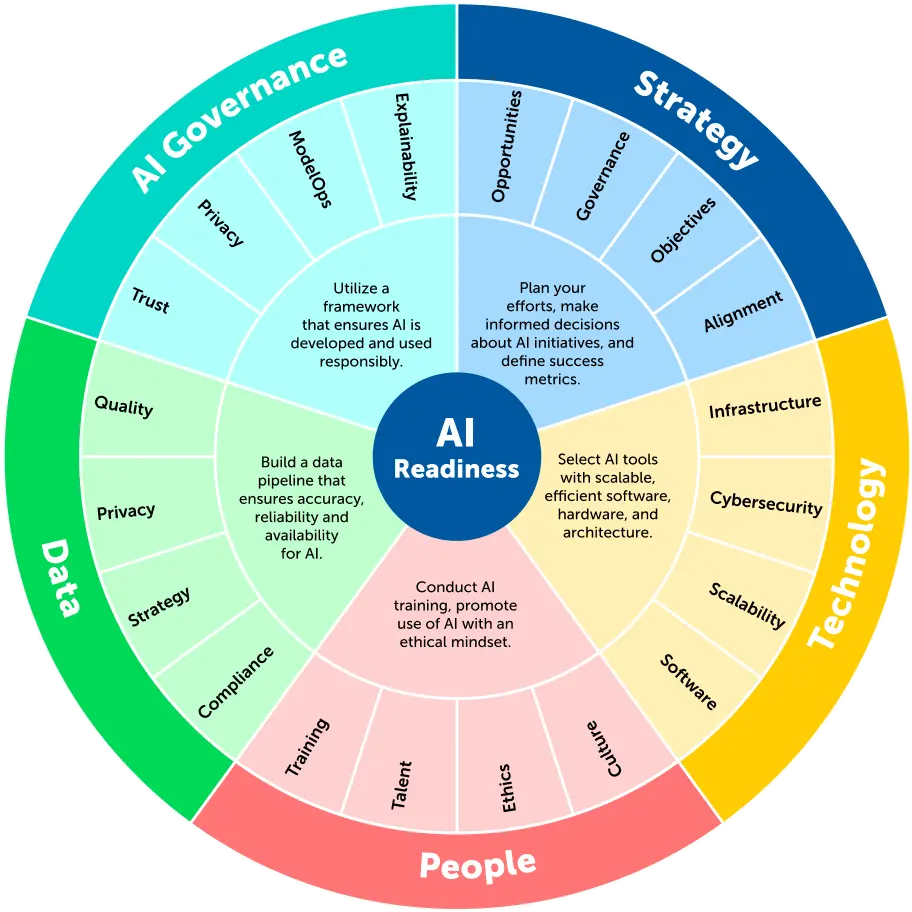

4️⃣ Hallucinations Become Business Risk

In a demo, hallucinations are amusing.

In production, they are liabilities.

If an AI:

- Generates incorrect financial advice

- Misroutes customer requests

- Produces fabricated policy information

The risk is no longer technical — it becomes legal and reputational.

Many AI pilots underestimate governance layers:

- Guardrails

- Retrieval constraints

- Validation checks

- Human-in-the-loop systems

Break point: Lack of safety architecture.
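A validation check with a human-in-the-loop fallback can start out very simple. The sketch below is a deliberately crude example, not a complete guardrail: it escalates any answer that touches a blocked topic, has no retrieved source, or mentions a number that does not appear in the sources. `check_answer`, `Verdict`, and the blocked-topic list are hypothetical names for this illustration.

```python
import re
from dataclasses import dataclass

NUMBER = re.compile(r"\d+(?:\.\d+)?%?")


@dataclass
class Verdict:
    action: str  # "send" or "escalate" (escalate = route to a human)
    reason: str


def check_answer(answer, sources, blocked_topics=("investment advice",)):
    """Decide whether a model answer can go out or needs human review."""
    lowered = answer.lower()
    for topic in blocked_topics:
        if topic in lowered:
            return Verdict("escalate", f"blocked topic: {topic}")
    if not sources:
        return Verdict("escalate", "no retrieved source to ground the answer")
    # Every number in the answer must also appear somewhere in the sources.
    source_numbers = set(NUMBER.findall(" ".join(sources)))
    unsupported = [n for n in NUMBER.findall(answer) if n not in source_numbers]
    if unsupported:
        return Verdict("escalate", f"numbers not found in sources: {unsupported}")
    return Verdict("send", "all checks passed")


if __name__ == "__main__":
    policy = ["Refunds are accepted within 30 days of purchase."]
    print(check_answer("Refunds are accepted within 30 days.", policy))
    print(check_answer("Refunds are accepted within 60 days.", policy))
```

The second call is escalated because "60" never appears in the policy text, which is the kind of fabricated detail that turns into liability.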

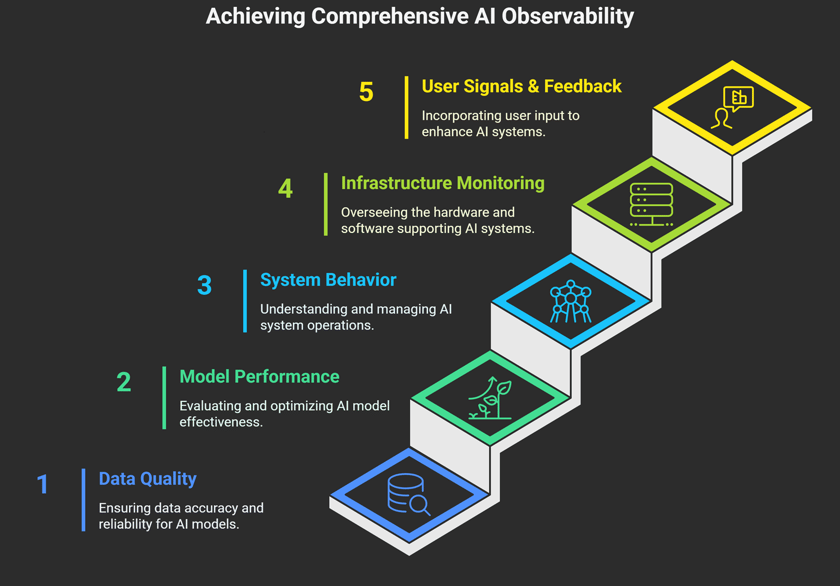

5️⃣ Monitoring Is an Afterthought

Traditional software monitoring tracks CPU, memory, and errors.

AI needs additional signals:

- Model drift

- Prompt performance degradation

- Response quality trends

- Hallucination rate

- Bias shifts

Without observability, degradation goes unnoticed.

AI systems don’t crash loudly.

They slowly degrade.

Break point: No feedback loop after deployment.
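A feedback loop can begin as a rolling window over whatever quality signal you already have, such as a thumbs-up rate or an automated check. This is a minimal sketch: `QualityMonitor`, the window size, and the tolerance are assumptions for illustration, and a production setup would feed this into real alerting.

```python
from collections import deque


class QualityMonitor:
    """Compare the recent pass rate against a baseline measured at launch."""

    def __init__(self, baseline_rate, window=200, tolerance=0.05):
        self.baseline_rate = baseline_rate
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # oldest results fall off automatically

    def record(self, passed):
        self.outcomes.append(bool(passed))

    def recent_rate(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def degraded(self):
        # Wait for a full window so a few early failures do not trigger an alert.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.recent_rate() < self.baseline_rate - self.tolerance


if __name__ == "__main__":
    monitor = QualityMonitor(baseline_rate=0.9, window=10)
    for ok in [True] * 10 + [False] * 3:
        monitor.record(ok)
    print(monitor.recent_rate(), monitor.degraded())
```

Slow degradation only becomes visible when someone is comparing today against a known baseline.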

6️⃣ Scaling Changes Behavior

A demo handles 10 requests.

Production handles 10,000.

Scaling changes:

- Latency expectations

- Cost models

- Memory consumption

- GPU utilization

- Queue behavior

Token limits, context windows, concurrency handling — these become operational realities.

An AI that feels “instant” in a demo can feel unusable at scale.

Break point: Infrastructure and cost miscalculation.
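The cost and concurrency side of scaling is mostly arithmetic, and it is worth doing before launch. The sketch below uses invented token counts and prices, and it assumes the 10 and 10,000 figures are requests per day. The concurrency estimate uses Little's law (requests in flight = arrival rate × time per request).

```python
def monthly_cost_usd(requests_per_day, input_tokens, output_tokens,
                     usd_per_1k_input, usd_per_1k_output, days=30):
    """Estimate monthly token spend for a fixed request shape."""
    per_request = (input_tokens / 1000 * usd_per_1k_input
                   + output_tokens / 1000 * usd_per_1k_output)
    return per_request * requests_per_day * days


def required_concurrency(requests_per_second, seconds_per_request):
    """Little's law: average number of requests in flight at once."""
    return requests_per_second * seconds_per_request


if __name__ == "__main__":
    # Hypothetical prices and token counts, not any vendor's real rates.
    shape = dict(input_tokens=1500, output_tokens=300,
                 usd_per_1k_input=0.003, usd_per_1k_output=0.015)
    print(f"demo:       ${monthly_cost_usd(10, **shape):,.2f} per month")
    print(f"production: ${monthly_cost_usd(10_000, **shape):,.2f} per month")
    print(f"in flight:  {required_concurrency(50, 2.0):.0f} requests")
```

With these made-up numbers the same workload goes from roughly $2.70 a month to roughly $2,700, and 50 requests per second at 2 seconds each means about 100 requests in flight at any moment.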

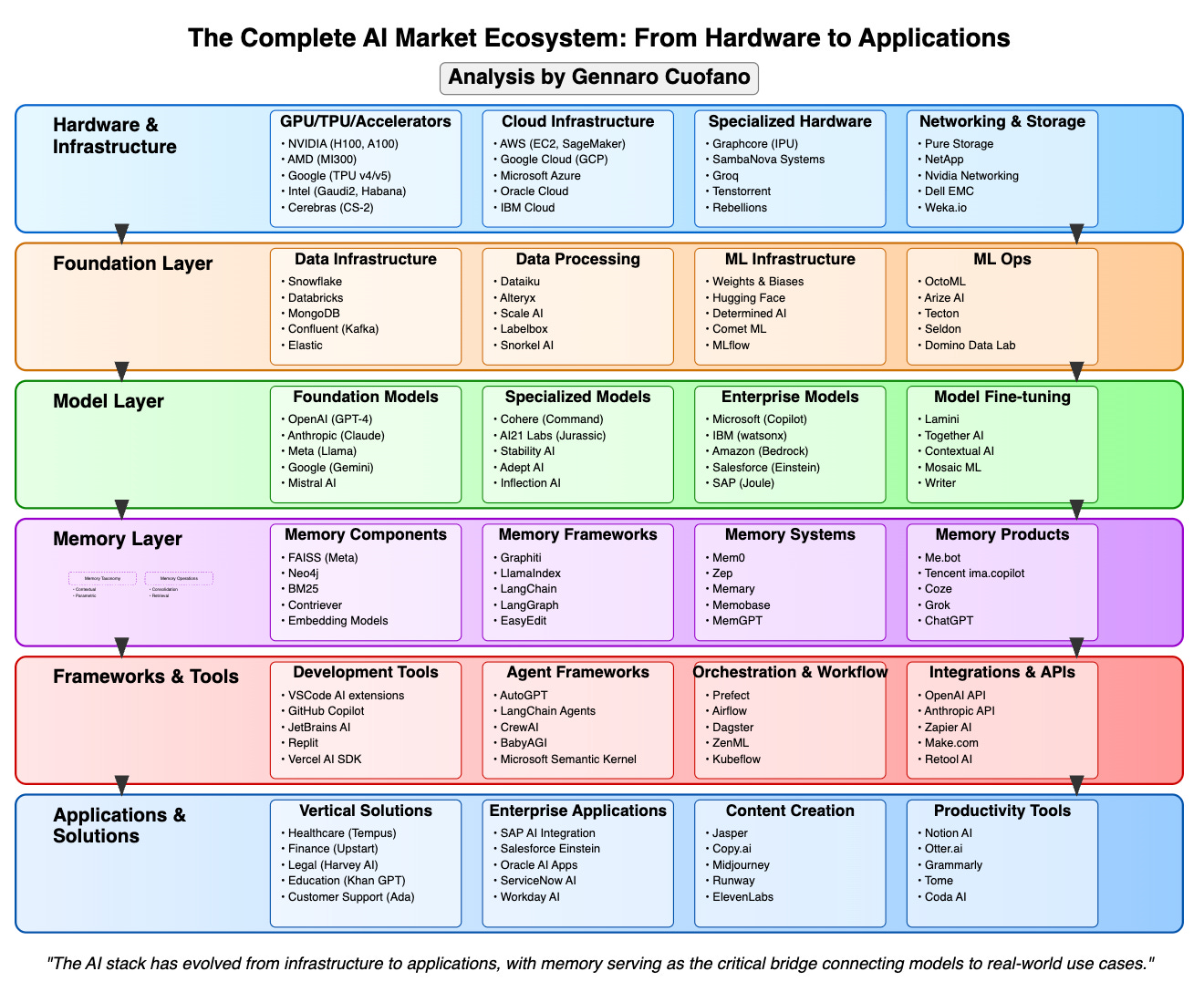

7️⃣ The Real Problem: AI Is a System, Not a Model

The biggest misconception in AI deployment:

The model is not the product.

The system is.

Production-ready AI requires:

- Prompt engineering

- Retrieval pipelines

- Guardrails

- Caching

- Observability

- Failover logic

- Governance

- Cost management

- Security controls

When teams demo the model but don’t architect the system, failure is delayed — not avoided.
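Two items from that list, caching and failover logic, fit in a few lines. This is a sketch under stated assumptions: `primary` and `fallback` are hypothetical callables that take a prompt and return text, and the in-memory dictionary stands in for a real cache with expiry.

```python
import hashlib


class ResilientClient:
    """Try the cache, then the primary model, then a fallback, then a canned reply."""

    def __init__(self, primary, fallback,
                 canned_reply="Sorry, I can't answer right now."):
        self.primary = primary
        self.fallback = fallback
        self.canned_reply = canned_reply
        self.cache = {}  # in-memory only; no expiry in this sketch

    def ask(self, prompt):
        """Return (answer, source) where source says which path produced it."""
        key = hashlib.sha256(prompt.strip().lower().encode("utf-8")).hexdigest()
        if key in self.cache:
            return self.cache[key], "cache"
        for name, backend in (("primary", self.primary), ("fallback", self.fallback)):
            try:
                answer = backend(prompt)
            except Exception:
                continue  # this backend failed, try the next one
            self.cache[key] = answer
            return answer, name
        return self.canned_reply, "canned"


if __name__ == "__main__":
    def flaky(prompt):
        raise TimeoutError("primary model timed out")

    client = ResilientClient(primary=flaky, fallback=lambda p: f"echo: {p}")
    print(client.ask("What is your refund policy?"))
    print(client.ask("What is your refund policy?"))
```

Returning the source alongside the answer makes it easy to log how often the system is running on its fallback path.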

Quantdig Framework: “AI Production Readiness Stack”

Layer 1 — Model Capability

Is the model intelligent enough?

Layer 2 — Input Resilience

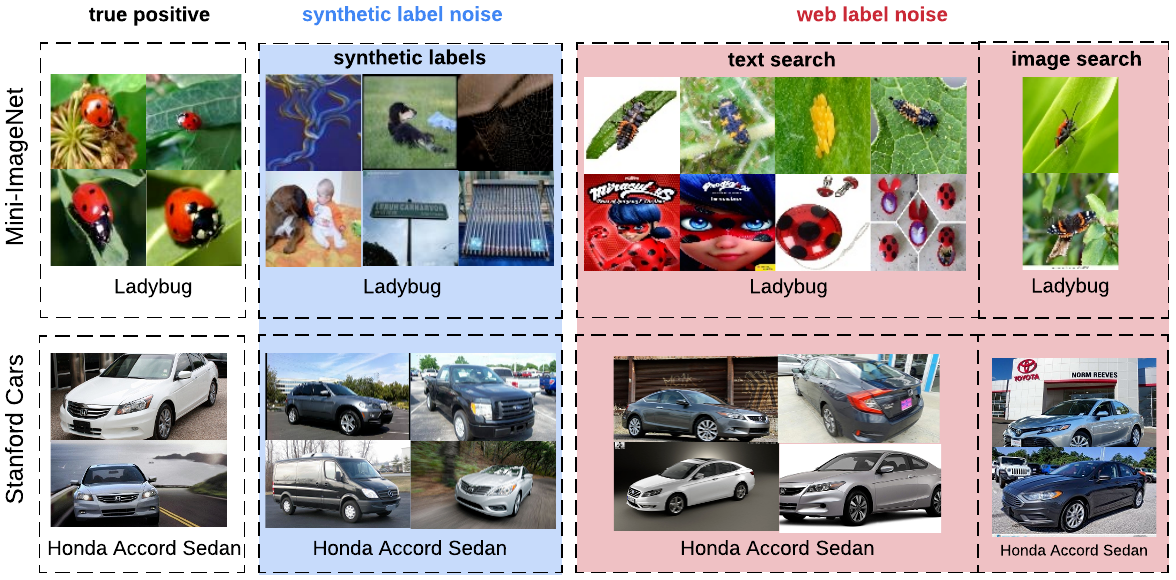

Can it handle messy real-world data?

Layer 3 — Integration Reliability

Does it survive API and dependency chains?

Layer 4 — Safety & Governance

Are hallucinations controlled?

Layer 5 — Observability

Can degradation be detected?

Layer 6 — Scalability & Cost Control

Can it handle real traffic sustainably?

Most AI failures don’t occur at Layer 1.

They occur above it.
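The stack can also be walked as a simple ordered checklist. The sketch below is one possible encoding, not an official implementation of the framework: `first_gap` and the shape of the `checks` dictionary are assumptions for illustration.

```python
from typing import Optional

# Layer names taken from the stack above, lowest layer first.
LAYERS = [
    "Model Capability",
    "Input Resilience",
    "Integration Reliability",
    "Safety & Governance",
    "Observability",
    "Scalability & Cost Control",
]


def first_gap(checks: dict) -> Optional[str]:
    """Return the lowest layer that is missing or failing, or None if all pass."""
    for number, layer in enumerate(LAYERS, start=1):
        if not checks.get(layer, False):
            return f"Layer {number}: {layer}"
    return None


if __name__ == "__main__":
    # A typical post-demo state: the model works, nothing else is verified yet.
    print(first_gap({"Model Capability": True}))
```

Treating an unchecked layer as a failing one is a deliberate choice here: a layer nobody has verified should not count as ready.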

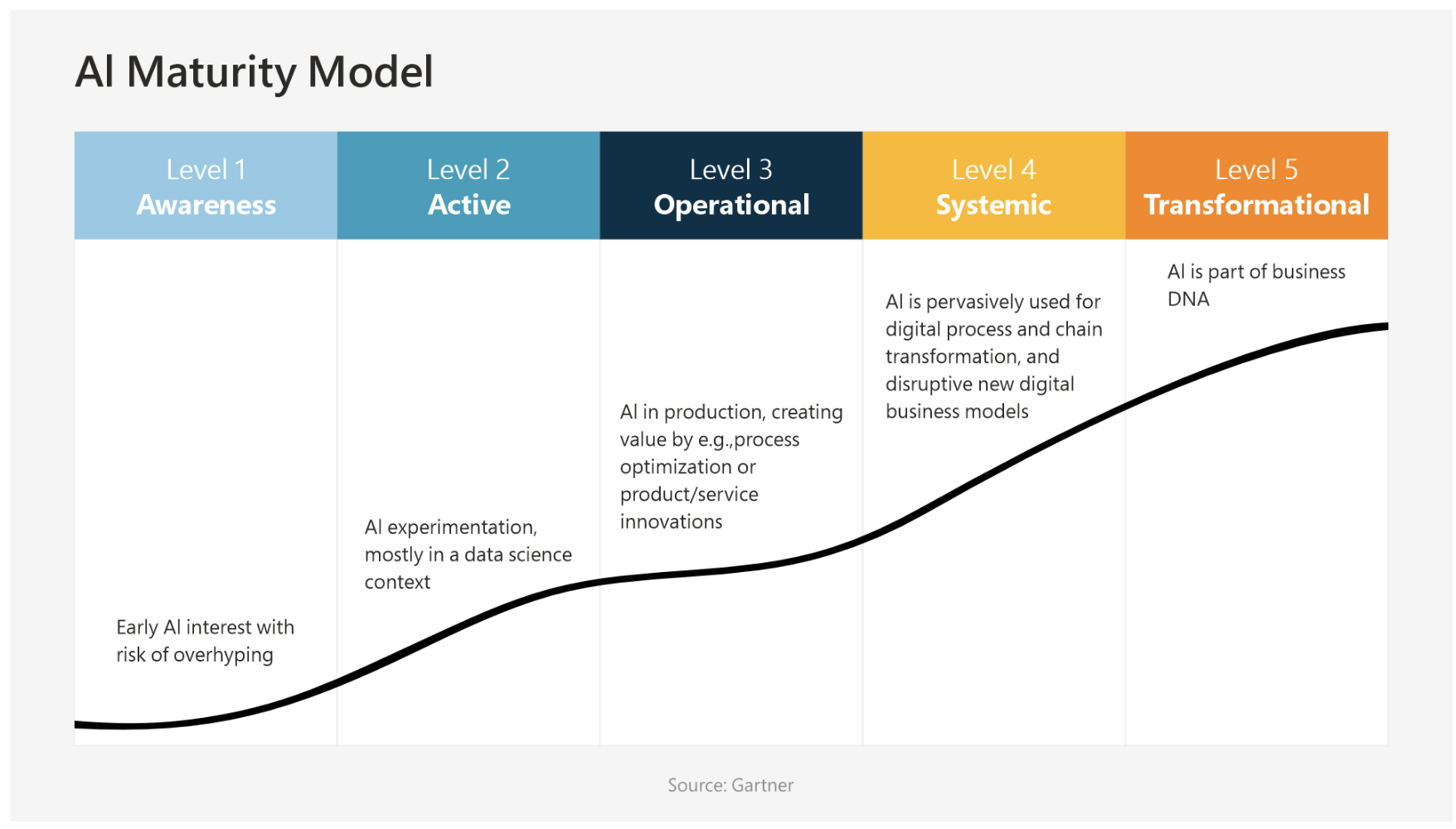

Final Thought

AI demos sell possibility.

Production tests discipline.

The companies that succeed with AI aren’t the ones with the smartest models.

They’re the ones that treat AI as infrastructure — not spectacle.